What B2B search must do — and where many vendors fall short

B2B buyers don’t browse. They don’t get inspired. They arrive at your site with a part number, a SKU, a contract reference, or a manufacturer code in hand. The whole act of “search” in B2B is fundamentally different from B2C — and treating them as the same problem produces platforms that demo well and operate poorly.

So what does B2B search actually have to do? And how do today’s platforms perform against that bar?

The Three Cs of B2B Search

There are three jobs B2B search has to perform, in increasing order of difficulty:

- Confirm — match the specification

- Connect — translate the specification to your catalog

- Complete — surface what the specification implies

These aren’t platform tiers. They’re buyer-intent tiers. Every B2B site faces all three, every day. The variance is in how well each platform — and each underlying catalog — handles them.

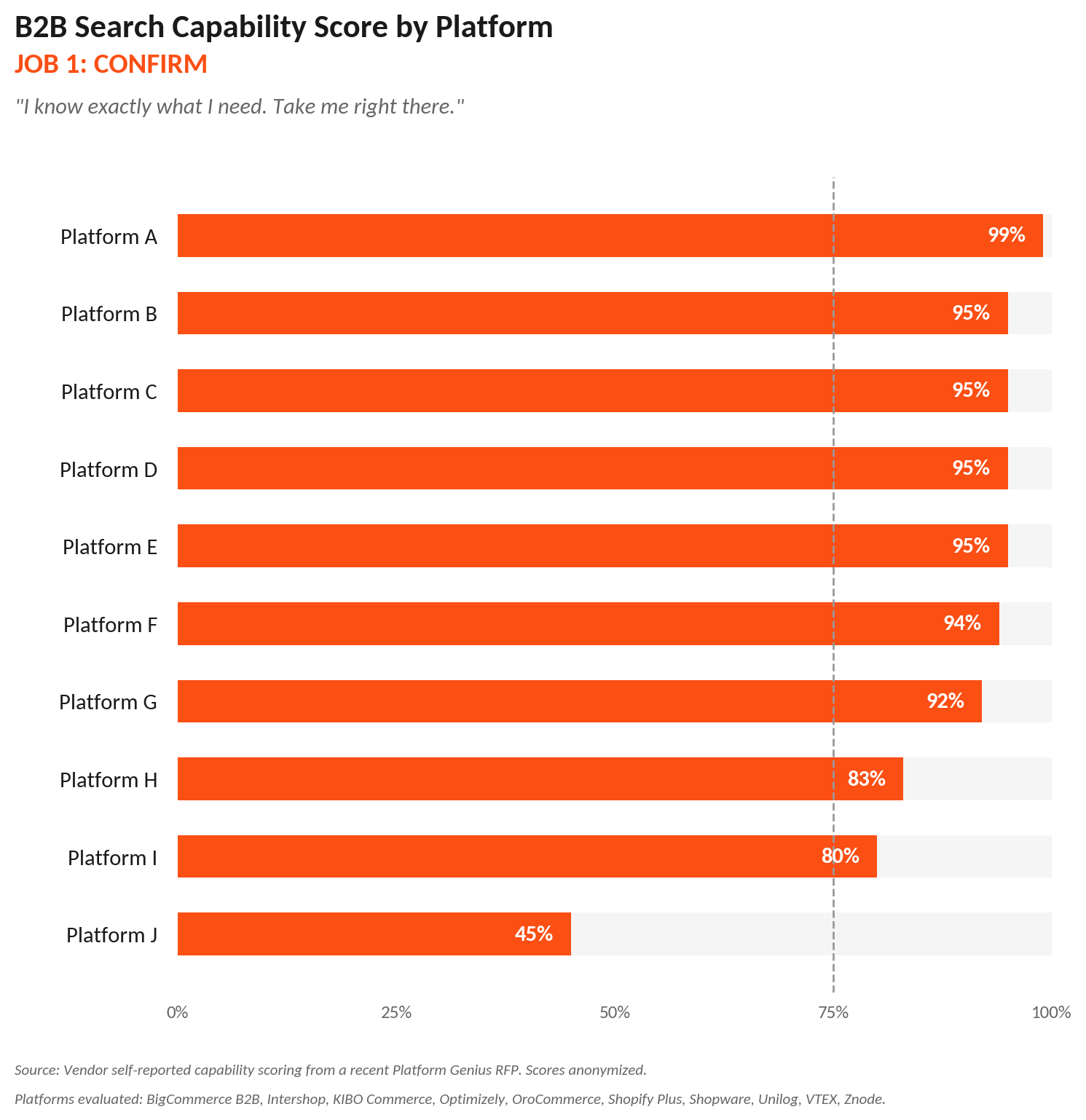

Job 1: Confirm

“I know exactly what I need. Take me right there.”

Buyer intent. The buyer has a known specification. They’re not exploring; they’re transacting. Speed is the only thing that matters.

How they’re searching. Part numbers, SKUs, manufacturer codes, partial identifiers, predictable misspellings of any of the above.

What the platform must do. Type-ahead, exact-match, partial-match, fuzzy-match, basic facets, sort by price, availability, or relevance. Get out of the way.

What the data must support. Clean product identifiers. SKUs consistent across sources. Part numbers normalized. Misspellings and variants handled in indexing. Sounds basic, but most distributors have legacy hygiene problems that show up here. A platform with an excellent search engine indexing dirty data will fail Job 1 — and the buyer blames the platform when the actual problem is the data.
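To make the Confirm job concrete, here is a minimal sketch of normalized identifiers feeding a tiered lookup: exact match first, then partial (prefix) match, then fuzzy match. The catalog entries, the `normalize` and `confirm` names, and the 0.8 similarity cutoff are all illustrative assumptions, not any vendor's implementation.

```python
import re
from difflib import get_close_matches


def normalize(part_number: str) -> str:
    """Uppercase and drop everything that is not a letter or digit."""
    return re.sub(r"[^A-Z0-9]", "", part_number.upper())


# Hypothetical catalog: normalized part number -> internal SKU.
CATALOG = {
    normalize(pn): sku
    for pn, sku in [
        ("BV-250-NPT", "SKU-1001"),
        ("BV-375-NPT", "SKU-1002"),
        ("HX-9000/A", "SKU-2001"),
    ]
}


def confirm(query: str, limit: int = 5) -> list:
    """Return matching SKUs: exact first, then prefix, then fuzzy."""
    key = normalize(query)
    if not key:
        return []
    # 1. Exact match on the normalized identifier.
    if key in CATALOG:
        return [CATALOG[key]]
    # 2. Partial match: the buyer typed the start of a part number.
    prefix_hits = [sku for pn, sku in CATALOG.items() if pn.startswith(key)]
    if prefix_hits:
        return prefix_hits[:limit]
    # 3. Fuzzy match for predictable misspellings (cutoff is an assumption).
    close = get_close_matches(key, list(CATALOG), n=limit, cutoff=0.8)
    return [CATALOG[pn] for pn in close]
```

Because both the index and the query pass through the same `normalize` step, "bv 250 npt" and "BV-250-NPT" resolve to the same SKU. The fuzzy tier only runs when the cheaper tiers find nothing, which keeps a clean exact match from being diluted by near misses.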

What the scores reveal. Confirm is mostly a solved problem. Most platforms in the cohort score above 90%. Two score notably lower — and in both cases, foundational capabilities are delivered through partner integrations rather than native features. When a platform can’t reliably confirm on its own, no third-party search vendor patches that gap cleanly.

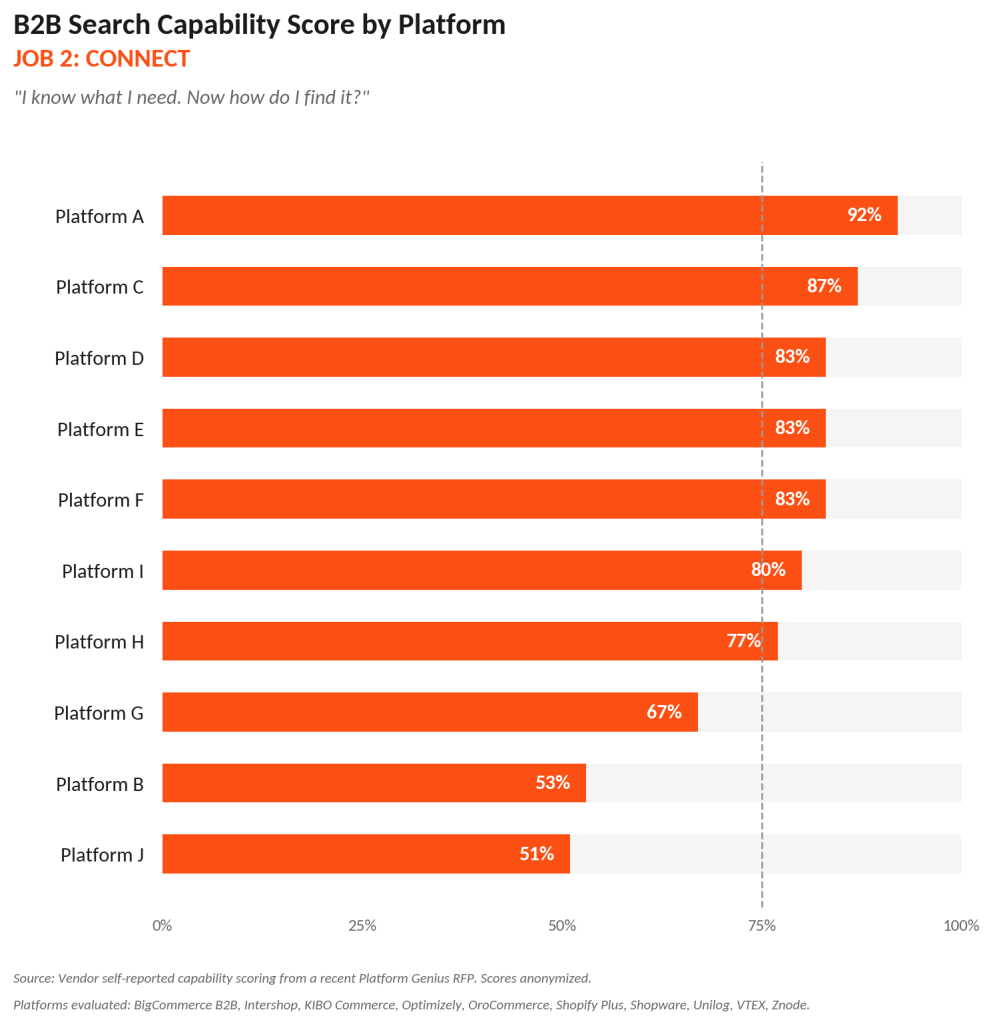

Job 2: Connect

“I know what I need. How do I find it?”

Buyer intent. The buyer has a specification, but it’s in their language, not yours. Translation work begins. They know what they want; they don’t know what you call it.

How they’re searching. Competitor part numbers expecting your equivalent. Customer-specific part numbers from their contract or PO system. Manufacturer codes when your catalog organizes by internal SKU. Technical specifications — “1/4 inch brass NPT” — when your catalog uses model identifiers. Alternate part numbers across legacy systems that never got reconciled.

What the platform must do. Maintain cross-references between your SKUs and external identifiers. Surface customer-specific part numbers based on account context. Index technical attributes deeply enough that spec-based search returns relevant results. Enable saved and recent searches, because B2B buyers rerun queries every month. Weight relevance based on what’s actually being purchased, not just keyword frequency.

What the data must support. A multi-vocabulary catalog. You maintain cross-references between your SKUs and competitor part numbers, manufacturer codes, customer-specific part numbers from contracts, alternate identifiers from legacy systems, and technical attributes structured deeply enough to be searchable. This is the heaviest data lift in B2B and the place most distributors are weakest. No platform — and no third-party search vendor — can translate specifications it has no cross-reference data for.
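A multi-vocabulary catalog can be pictured as a cross-reference table consulted before the regular search index. The sketch below assumes a simple in-memory list; the `XRef` record, the sample identifiers, and the account IDs are hypothetical and stand in for whatever your ERP or PIM actually holds.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class XRef:
    source: str                    # "competitor", "manufacturer", "customer", "legacy"
    identifier: str                # external identifier as the buyer types it
    sku: str                       # your internal SKU
    account: Optional[str] = None  # set only for customer-specific part numbers


# Hypothetical cross-reference data.
XREFS = [
    XRef("competitor", "ACME-4471", "SKU-1001"),
    XRef("manufacturer", "MFR-88-B", "SKU-1001"),
    XRef("customer", "PN-0007", "SKU-1002", account="acct-42"),
    XRef("customer", "PN-0007", "SKU-2001", account="acct-77"),
]


def connect(query: str, account: Optional[str] = None) -> list:
    """Translate an external identifier into internal SKUs."""
    key = query.strip().upper()
    matches = [x for x in XREFS if x.identifier.upper() == key]
    # Customer-specific part numbers apply only to the signed-in account.
    if account is not None:
        own = [x.sku for x in matches
               if x.source == "customer" and x.account == account]
        if own:
            return own
    # Otherwise fall back to identifiers that are valid for every buyer.
    return [x.sku for x in matches if x.source != "customer"]
```

The same string, "PN-0007", resolves to different SKUs for different accounts and to nothing for an anonymous visitor. That is the account-context behavior described above, and it only works if the cross-reference rows exist in the first place.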

What the scores reveal. This is where platforms separate. Connect is the most important job in B2B search and the one platforms most quietly ignore in their marketing. Half the cohort delivers these capabilities natively; the other half requires configuration, partner integration, or custom development — and the buyers paying for those platforms often don’t discover that until implementation.

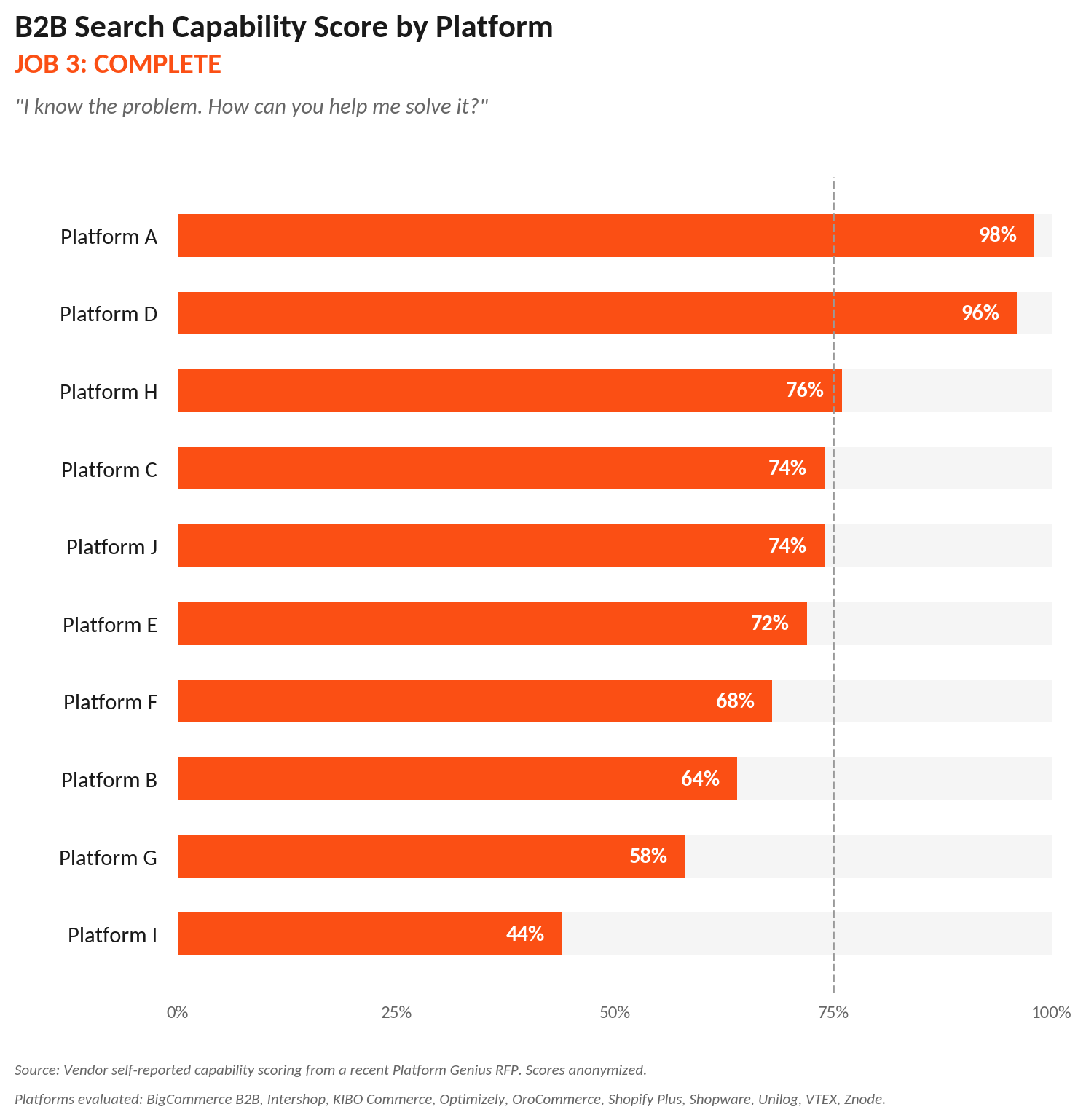

Job 3: Complete

“I know the problem. How can you help me solve it?”

Buyer intent. The buyer specified one thing. Their actual need is bigger than what they typed. They may not even know it.

How they’re searching. They aren’t searching for the additional thing — they’re searching for the original part. The system has to surface what the specification implies: the accessory required to install it, the substitute when it’s out of stock, the cheaper functional equivalent (which has liability implications in regulated B2B), the current version of a discontinued part, the kit or assembly the part belongs to.

What the platform must do. Boost-and-bury based on relevance, sales volume, or merchandising rules. Synonyms that map industry jargon to catalog terms. Search analytics tied to revenue, not just clicks. A/B testing infrastructure. Personalization informed by purchase history and account context.
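As a rough illustration of two of these capabilities, the sketch below maps buyer jargon to catalog terms with a synonym table, then applies a simple boost-and-bury rule: boost by sales volume, bury discontinued items. The product data, field names, and the rule itself are assumptions chosen for brevity; real merchandising rules are usually configurable and far richer.

```python
from dataclasses import dataclass

# Hypothetical synonym table: buyer jargon -> catalog term.
SYNONYMS = {"zip tie": "cable tie", "allen key": "hex key"}


@dataclass(frozen=True)
class Product:
    sku: str
    name: str
    units_sold: int
    discontinued: bool = False


def expand(query: str) -> str:
    """Replace a known jargon phrase with the term the catalog uses."""
    q = query.strip().lower()
    return SYNONYMS.get(q, q)


def rank(query: str, products: list) -> list:
    """Return SKUs whose name contains the expanded term, boosted and buried."""
    term = expand(query)
    hits = [p for p in products if term in p.name.lower()]
    # Bury discontinued items (False sorts before True),
    # then boost by sales volume (higher units_sold first).
    hits.sort(key=lambda p: (p.discontinued, -p.units_sold))
    return [p.sku for p in hits]


PRODUCTS = [
    Product("SKU-1", "Cable Tie 8in Black", units_sold=500),
    Product("SKU-2", "Cable Tie 8in Natural", units_sold=900, discontinued=True),
    Product("SKU-3", "Cable Tie 12in Black", units_sold=1200),
    Product("SKU-4", "Hex Key Set", units_sold=50),
]
```

With this data, a search for "zip tie" returns the two active cable ties ordered by sales volume, with the discontinued one last, even though the discontinued item outsold one of the active ones.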

What the data must support. A catalog with relationships. Parts know about their accessories. Discontinued parts know about their successors. Substitutes know about their compatibility. Kits know their components. This is product information management work, not search work — and it’s where most distributors haven’t even started.
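One way to picture "a catalog with relationships" is a part record that carries its own links. The sketch below is a simplified, assumed data model (the `Part` fields and the `complete` function are hypothetical) showing how a search for a discontinued part could resolve to its successor and surface accessories and in-stock substitutes.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Part:
    sku: str
    in_stock: bool = True
    successor: Optional[str] = None                   # set on discontinued parts
    accessories: list = field(default_factory=list)   # SKUs needed to install
    substitutes: list = field(default_factory=list)   # compatible alternatives


def complete(sku: str, catalog: dict) -> dict:
    """Resolve a SKU to its current version and what the purchase implies."""
    current = catalog[sku]
    seen = set()
    # Follow the successor chain, guarding against cycles in dirty data.
    while (current.successor in catalog) and (current.sku not in seen):
        seen.add(current.sku)
        current = catalog[current.successor]
    result = {
        "resolved": current.sku,
        "accessories": [a for a in current.accessories if a in catalog],
        "substitutes": [],
    }
    # Offer substitutes only when the resolved part cannot ship.
    if not current.in_stock:
        result["substitutes"] = [
            s for s in current.substitutes
            if s in catalog and catalog[s].in_stock
        ]
    return result


# Hypothetical catalog data.
CATALOG = {
    p.sku: p
    for p in [
        Part("PUMP-V1", successor="PUMP-V2"),
        Part("PUMP-V2", in_stock=False, accessories=["GASKET-9"],
             substitutes=["PUMP-X", "PUMP-Y"]),
        Part("PUMP-X", in_stock=False),
        Part("PUMP-Y"),
        Part("GASKET-9"),
    ]
}
```

None of this logic is sophisticated. The work is in populating `successor`, `accessories`, and `substitutes` accurately for every part, which is the product information management effort described above.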

What the scores reveal. Complete has bimodal scoring across the cohort. A few platforms invest heavily in native catalog intelligence; others outsource it almost entirely to partners. The pattern reveals platform philosophy more than platform quality. The hard truth: no amount of search software solves Job 3 if the catalog data isn’t disciplined to begin with. The platform conversation is downstream of the data conversation.

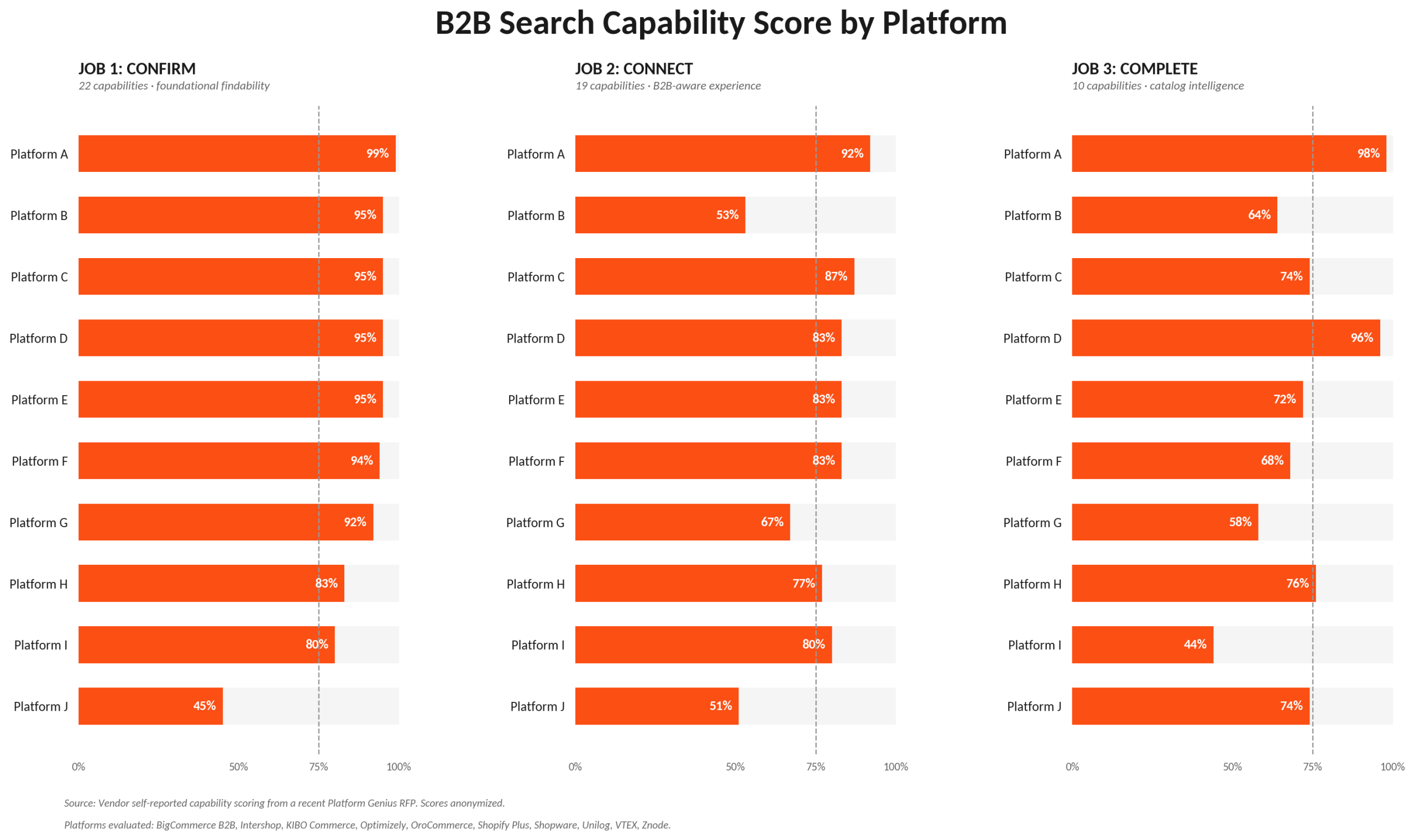

Three Jobs, Three Disciplines

The three jobs test three different things:

- Confirm tests your platform’s search engine.

- Connect tests your platform’s B2B awareness — and your catalog’s vocabulary.

- Complete tests your catalog’s intelligence — the relationships, the structure, the discipline.

Move from one job to the next and the demand shifts from software to architecture to discipline. Platforms that perform well across all three are rare. A platform that scores well on Complete by routing buyers to a third-party search vendor is not in the same category: it delivers the capability through a partner ecosystem that costs more, takes longer to implement, and still depends on data your team has to clean.

What to Do With This

If your platform underperforms on Confirm, the fix is platform-level. No amount of merchandising tooling closes a foundational findability gap.

If your platform underperforms on Connect, the fix is partly platform and partly catalog architecture. Customer-specific part numbers, technical attributes, contract pricing — these are catalog problems before they’re search problems.

If your platform underperforms on Complete, ask whether you have the catalog discipline to operate Complete capability before you buy more of it. Buying personalization software without disciplined catalog data is paying for capability you can’t operate.

The maturity curve in B2B search isn’t really about your platform. It’s about your data. The platform either honors that data or it doesn’t.

Source: Vendor self-reported capability scoring from a recent Platform Genius RFP. Scores anonymized.

Platforms evaluated: BigCommerce B2B, Intershop, KIBO Commerce, Optimizely, OroCommerce, Shopify Plus, Shopware, Unilog, VTEX, Znode.
